Breaking through the limits of photography to produce true-to-life pictures down to the details

Deep Learning Image Processing Technology

Canon has established proprietary deep learning image processing technology that enables corrections to issues inherent to photography

June 22, 2023

Suppresses unavoidable noise and lens blur in photography

Every moment is a slice of time. It won’t occur the same way again, but you can record it with your camera. Whether it was a stunning view you saw for the first time, or a special moment to cherish forever, your camera can capture such scenes and preserve them in photographs.

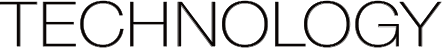

Until recently, however, photography had unavoidable image quality issues. They include, for example, noise that makes a photograph look grainy, moire that causes mottled patterns in the resulting photograph, and image blurring caused by the basic mechanism of the lens. These issues are a result of optical elements causing phenomena, not visible to the naked eye, to appear in photographs. For example, the peripheral areas of a wide-angle lens have lower optical performance than the center part. Therefore, images captured through that part of the lens are more likely to be blurred. This problem could not be prevented even by skilled photographers.

In recent years, AI technology has progressed tremendously, and deep learning¹ technology is now being used in many applications. As an industry-leading camera and lens manufacturer with unrivaled expertise in the field, Canon tackled the previously "unsolvable" problems inherent in the principles of photography by developing its own deep-learning image processing technology.

- 1 Deep learning: A method of machine learning based on artificial neural networks inspired by the human brain. By training a computer using large amounts of data, it can make desirable inferences and decisions based on features derived from that data.

Preparing large amounts of training data crucial for deep learning technology

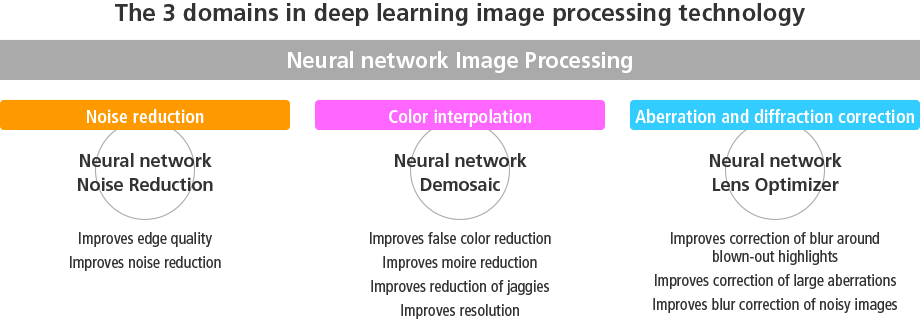

To achieve "true-to-life" photography, Canon used deep learning image technology to tackle three areas where image degradation had previously been considered inevitable: noise reduction, color interpolation, and aberration and diffraction correction (the correction of lens blur).

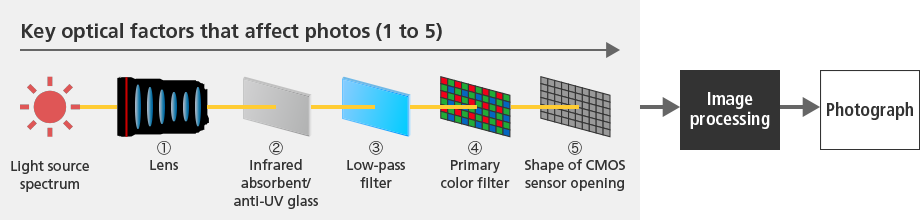

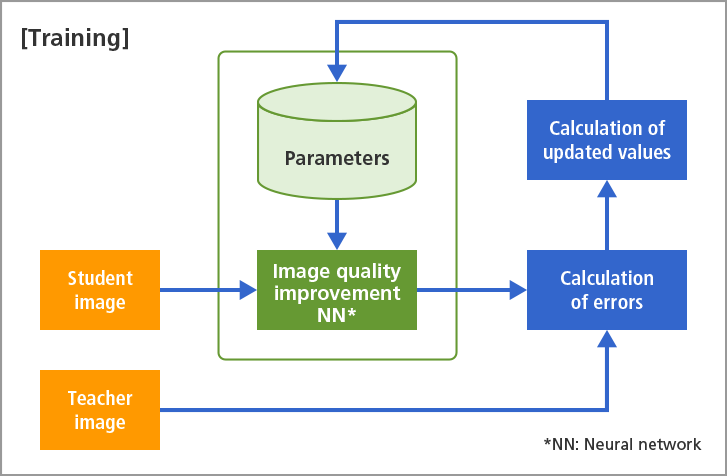

The accuracy of the results of deep-learning image-processing technology depends largely on how much training data, made from pairs comprising a teacher image and a student image, can be prepared.
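The idea of a training pair can be sketched in a few lines. This is only an illustration under stated assumptions, not Canon's data pipeline: the helper name `make_training_pair`, the tiny 4x4 image, and the use of synthetic Gaussian noise are all hypothetical. The teacher image is the clean target and the student image is a degraded version of it.

```python
import random

def make_training_pair(teacher, noise_sigma, seed=0):
    """Return (student, teacher); the student is the teacher plus Gaussian noise.

    Hypothetical helper: synthetic noise stands in for real sensor degradation.
    """
    rng = random.Random(seed)
    student = [
        # Add noise to every pixel, then clip back into the valid [0, 1] range.
        [min(1.0, max(0.0, px + rng.gauss(0.0, noise_sigma))) for px in row]
        for row in teacher
    ]
    return student, teacher

# A tiny 4x4 grayscale "teacher" image with values in [0, 1].
teacher = [[(x + y) / 6 for x in range(4)] for y in range(4)]
student, target = make_training_pair(teacher, noise_sigma=0.05)
```

A network trained on many such pairs learns to map the student input back to the teacher target, which is why the quantity and quality of the pairs matter so much.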

Through decades of camera and lens development, Canon had accumulated an immense image database of every sort of subject possible. Furthermore, these images are in RAW format, which holds more information than other image file formats like JPEG. Canon was able to prepare a large amount of ideal training data using this image database, while also leveraging its in-depth knowledge as a camera manufacturer about how camera settings affect image quality.

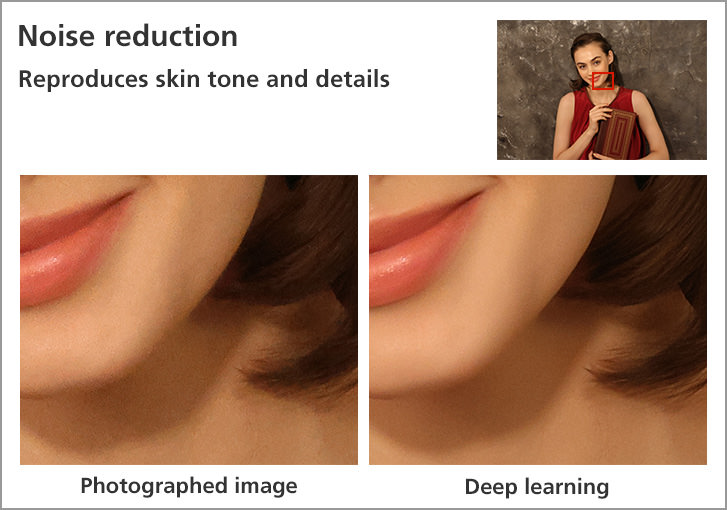

Noise reduction that removes only noise, preserving details

Many noise reduction methods have been developed to date. When they were used, however, not only the noise but also the details of the subject were erased, and as a result, image quality was often degraded. Thus, there were many requests for improvement from professional photographers. Unable to find a definitive solution, Canon decided to pursue noise-reduction image processing that could produce clear, high-quality images with deep learning, using the large number of low-degradation images and hard-to-process images it possessed.

But deep learning can't solve every problem. In some shooting scenarios it performs "false corrections," the effects of which are in some cases worse than traditional image processing. To address this formidable challenge, Canon modified the architecture of the neural network, training process, and training data to reflect its own deep knowledge of the noise produced by cameras.

These efforts resulted in the establishment of the Neural network Noise Reduction function, which enables clear, high-quality results. The function suppresses the noise in high-ISO shooting, where noise is amplified along with the light signal as the sensitivity is increased, and it also improves the rendering of smooth skin textures (skin tones), which are often damaged by noise.
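Why raising the ISO does not rescue a dark exposure can be shown with a simplified model. The sketch below assumes photon shot noise only (standard deviation equal to the square root of the photon count) and ignores read noise and other sources; the function name `snr_after_gain` and the photon counts are illustrative assumptions.

```python
import math

def snr_after_gain(photons, gain):
    """Signal-to-noise ratio after amplification, under a shot-noise-only model."""
    signal = photons * gain
    noise = math.sqrt(photons) * gain  # gain scales the noise as much as the signal
    return signal / noise

# Same output brightness: 16x less light compensated by 16x more gain.
snr_low_iso = snr_after_gain(photons=10000, gain=1)   # 100.0
snr_high_iso = snr_after_gain(photons=625, gain=16)   # 25.0
```

The gain cancels out of the ratio, so the result depends only on how much light was captured. In this model the high-ISO exposure is four times noisier relative to its signal.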

Skin can now be rendered more smoothly even when shot at a high ISO.

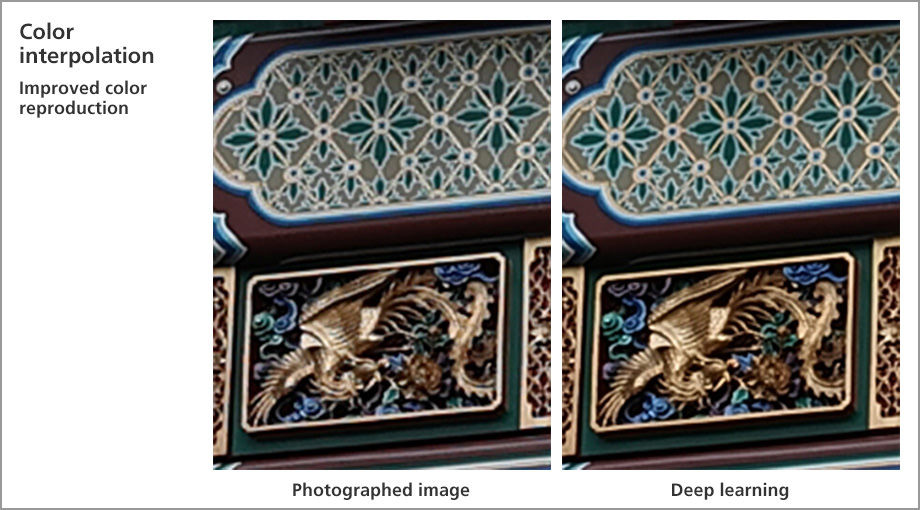

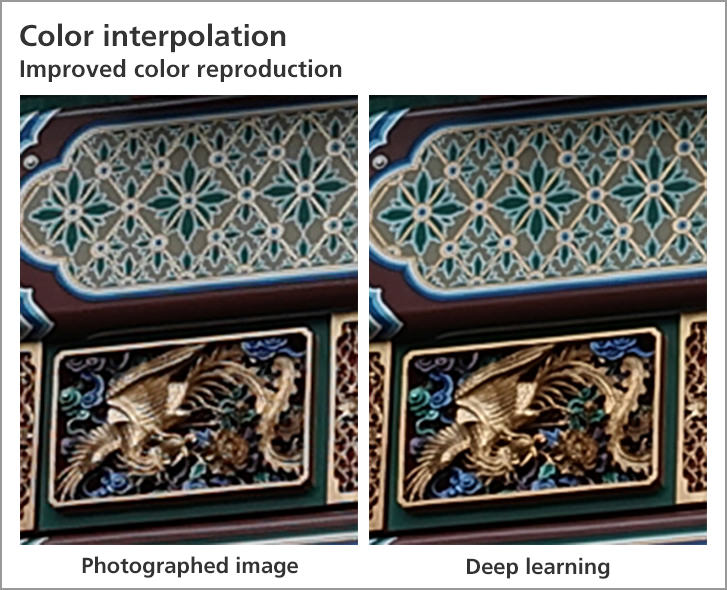

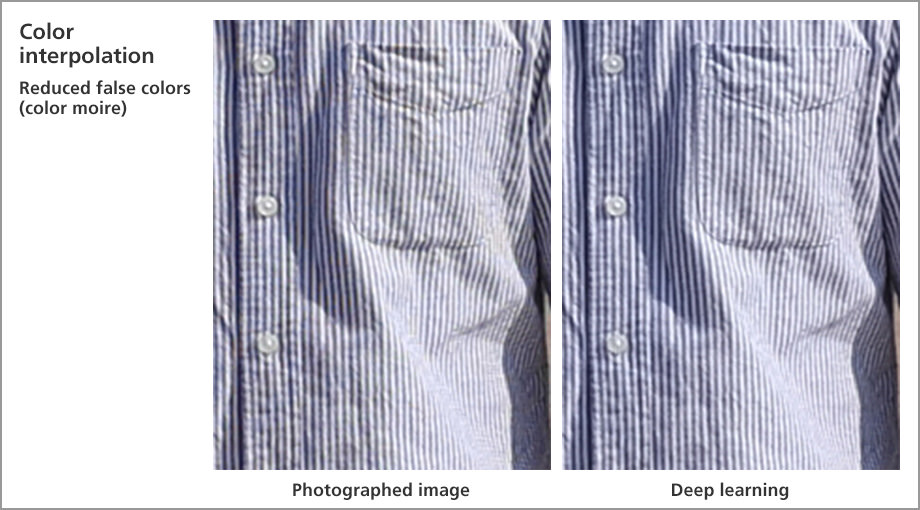

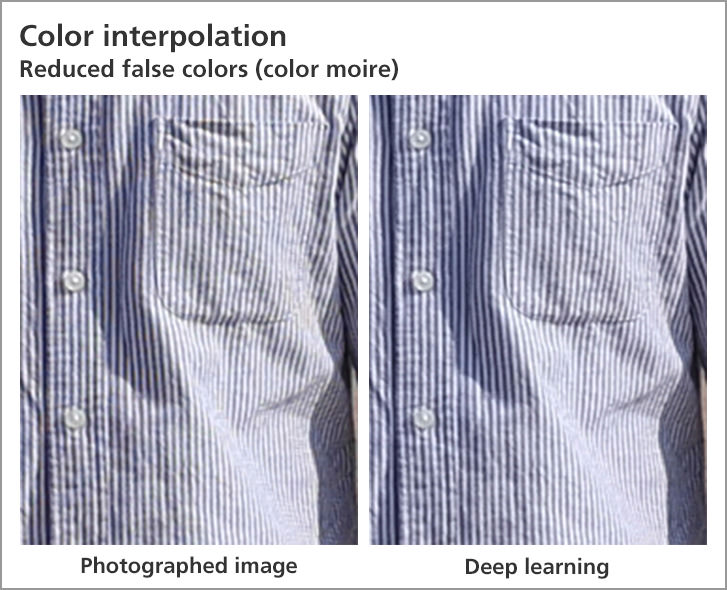

Color interpolation processing: reducing moire and jaggies

Digital cameras have an inherent problem called moire, which is caused by a basic mechanism of digital photography. Moire refers to mottled patterns that appear in captured images when photographing subjects with repeated patterns, such as stripes and checks. One cause is the regular arrangement of the pixels on a digital camera’s image sensor. The other cause is color interpolation.
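The first cause, sampling on a regular pixel grid, can be illustrated with a one-dimensional sketch. The stripe frequencies and the function name `sample_stripes` are assumptions chosen for illustration. A stripe pattern finer than the grid can resolve produces exactly the same samples as a much coarser pattern that is not in the scene.

```python
import math

def sample_stripes(cycles_per_pixel, num_pixels):
    """Sample a sinusoidal stripe pattern at integer pixel positions."""
    return [math.cos(2 * math.pi * cycles_per_pixel * n) for n in range(num_pixels)]

fine = sample_stripes(0.9, 20)    # stripes finer than the grid can resolve
coarse = sample_stripes(0.1, 20)  # a coarse pattern that is not in the scene

# The two sets of samples are indistinguishable up to rounding error.
max_diff = max(abs(a - b) for a, b in zip(fine, coarse))
```

Once the samples are taken, nothing in the data says which pattern was real, which is why moire is hard to remove after the fact.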

Each pixel in the image sensor detects only one of the three primary colors of light: red (R), green (G), or blue (B). Color interpolation generates a full set of the three colors for that pixel by inferring the other two from the information of surrounding pixels. Because they stem from this basic mechanism, "false color" (color moire), which does not exist in the actual appearance of the subject, and "jaggies," which make diagonal lines look jagged, could not be avoided. As with noise reduction, many methods of reducing these causes of image quality deterioration have been developed. Unfortunately, perceived resolution and color reproduction suffered in the process.
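The interpolation step can be sketched with plain neighbor averaging. This is a minimal illustration of conventional interpolation, not Canon's Neural network Demosaic: the RGGB layout and the names `bayer_color` and `demosaic` are assumptions. Each missing color is filled in with the average of the nearby pixels that did measure it.

```python
def bayer_color(y, x):
    """Color measured at (y, x) in an assumed RGGB Bayer layout."""
    if y % 2 == 0:
        return "R" if x % 2 == 0 else "G"
    return "G" if x % 2 == 0 else "B"

def demosaic(mosaic):
    """Fill in missing colors by averaging same-color samples in a 3x3 window."""
    h, w = len(mosaic), len(mosaic[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            sums = {"R": 0.0, "G": 0.0, "B": 0.0}
            counts = {"R": 0, "G": 0, "B": 0}
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < h and 0 <= nx < w:
                        c = bayer_color(ny, nx)
                        sums[c] += mosaic[ny][nx]
                        counts[c] += 1
            pixel = {c: sums[c] / counts[c] for c in "RGB"}
            pixel[bayer_color(y, x)] = mosaic[y][x]  # keep the measured value
            row.append((pixel["R"], pixel["G"], pixel["B"]))
        out.append(row)
    return out

# Black-and-white stripes one pixel wide: bright on even columns, dark on odd.
stripes = [[1.0 if x % 2 == 0 else 0.0 for x in range(4)] for y in range(4)]
rgb = demosaic(stripes)
r, g, b = rgb[1][1]  # red is 1.0 and blue is 0.0 although the scene has no color
```

In this example every red sample happens to fall on a bright stripe and every blue sample on a dark one, so a colorless scene is reconstructed with strong false color. Subjects like this are the hard cases that simple interpolation cannot handle.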

Using its rich image database, Canon established a deep learning image processing technology for color interpolation, which it named “Neural network Demosaic.”

The training dataset for that purpose was carefully constructed, taking into account the properties of human vision, which is sensitive to differences in brightness and less sensitive to changes in color.

Aiming to suppress false interpolation, the training dataset was centered on the kinds of subjects that are difficult to interpolate correctly. As a result, accurate interpolation is now possible even for such subjects as striped shirts that are prone to false colors, diagonal lines that are prone to jaggies, and pets that are prone to moire and false colors, thereby improving perceived resolution and color reproduction.

Improved color interpolation also improves color reproduction and the resolution of details.

The false colors in the photo of the striped shirt are greatly reduced, improving overall image resolution.

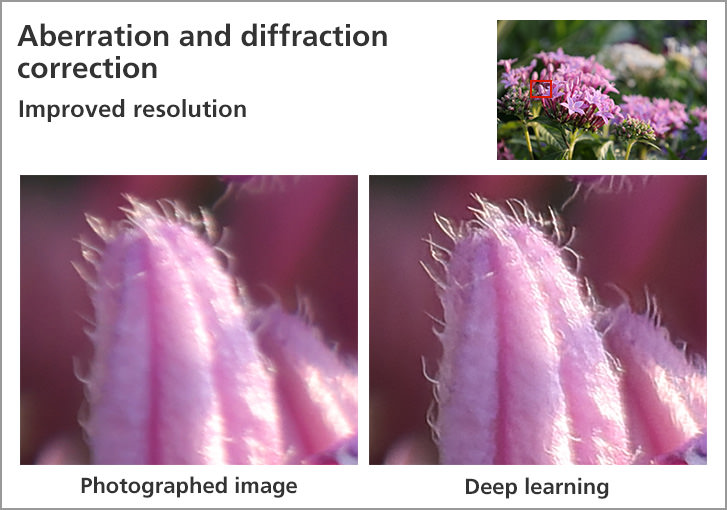

Even lens blur around blown-out highlights is corrected

A lens, the device through which a camera captures light, has theoretically unavoidable optical problems such as aberrations² and diffraction³ blurring.

High-performance lenses use combinations of concave and convex lens elements, aspherical lens elements, and special materials to minimize aberrations. However, eliminating them completely is theoretically impossible.

Canon’s Neural network Lens Optimizer, which is a deep learning image processing technology that corrects aberrations and diffraction, corrects the deterioration caused by such issues, significantly improving visual resolution.

What kind of aberrations and diffraction blur are there, and how do they happen?

For every lens that Canon produces, it has a complete grasp of the aberrations and diffraction blur of the lens during development and design.

By fully leveraging its simulation technology, Canon could therefore create a large number of high-precision pairs of student images with aberration and diffraction blurring and teacher images without those undesirable effects, based on such product data as design values for each lens model, and used them as training data for deep learning. By using these pairs, Canon succeeded in correcting the various types of blurring that occur depending on the position in the image. This is especially useful when a user wants to take clear images of landscapes, celestial bodies, and other subjects, even in the periphery of the shooting area. Furthermore, unlike conventional methods, the technology corrects aberration and diffraction blurring without amplifying noise.
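A simulated pair with position-dependent blur can be sketched in one dimension. This is a toy model under stated assumptions, not a simulation from lens design values: the linear `blur_radius` rule, the box blur, and the 11-pixel row are all hypothetical stand-ins for a real optical simulation.

```python
def blur_radius(x, width, max_radius):
    """Hypothetical blur model: radius grows linearly from the center to the edge."""
    center = (width - 1) / 2
    return round(max_radius * abs(x - center) / center)

def simulate_student(teacher_row, max_radius=2):
    """Blur each pixel with a box filter whose size depends on its position."""
    width = len(teacher_row)
    student = []
    for x in range(width):
        r = blur_radius(x, width, max_radius)
        window = teacher_row[max(0, x - r): x + r + 1]
        student.append(sum(window) / len(window))
    return student

# Teacher: three sharp points of light, one at the center and two near the edges.
teacher_row = [0, 1, 0, 0, 0, 1, 0, 0, 0, 1, 0]
student_row = simulate_student(teacher_row)
# The center point stays at 1.0; the two points near the edges drop to 0.25.
```

Because the degradation is generated from a known model, the sharp teacher is available for every student by construction, which is what makes simulated pairs attractive as training data.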

- 2 Aberrations: Phenomena associated with the refraction of light in an optical lens. There are several types of aberrations:

Spherical aberration: The spherical shape of the lens prevents light from focusing on a single point.

Chromatic aberration: A phenomenon caused by different refractive indices at different wavelengths of light.

Coma aberration: A dot at the periphery of a photograph that appears as a trailing comet.

In addition, there are other aberrations including astigmatism and distortion.

"Blurring," "distortion," and "color shift" of the image occur when the imaging position of the lens deviates from the ideal position.

- 3 Diffraction blur: Diffraction is a phenomenon in which light bends into the shadow area of an obstacle as it passes the edge of that obstacle. When shooting with a smaller aperture, the light spreads behind the aperture, impairing the sharpness and contrast of the image.

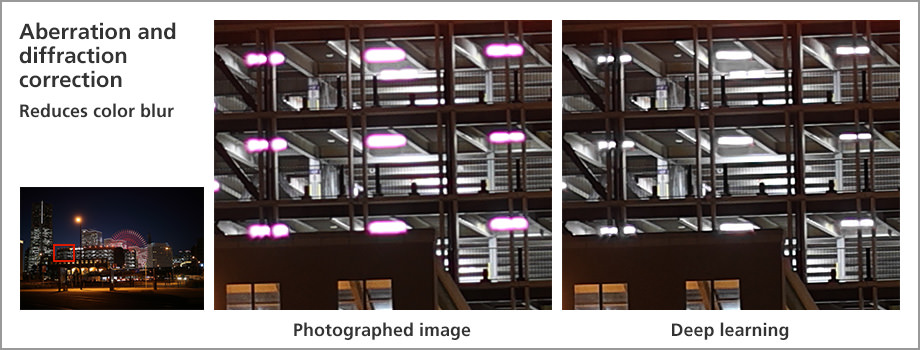

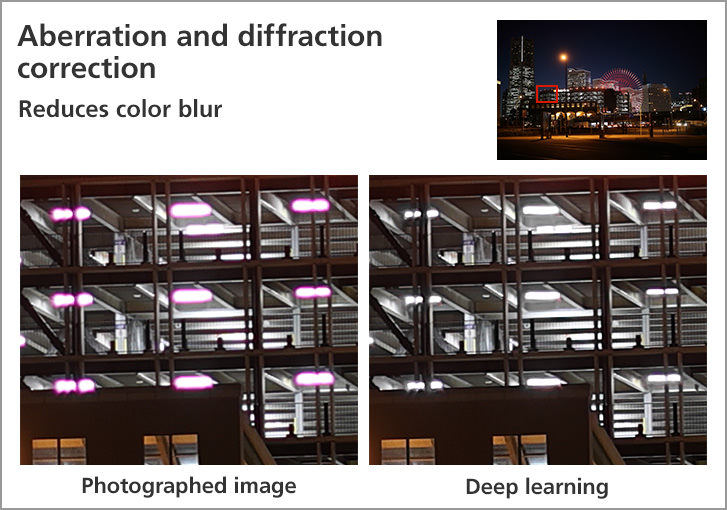

Correcting blur caused by aberration sharpens the details of subjects.

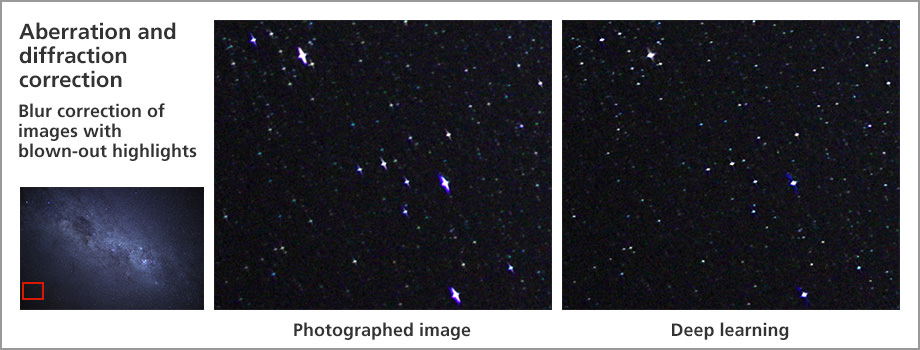

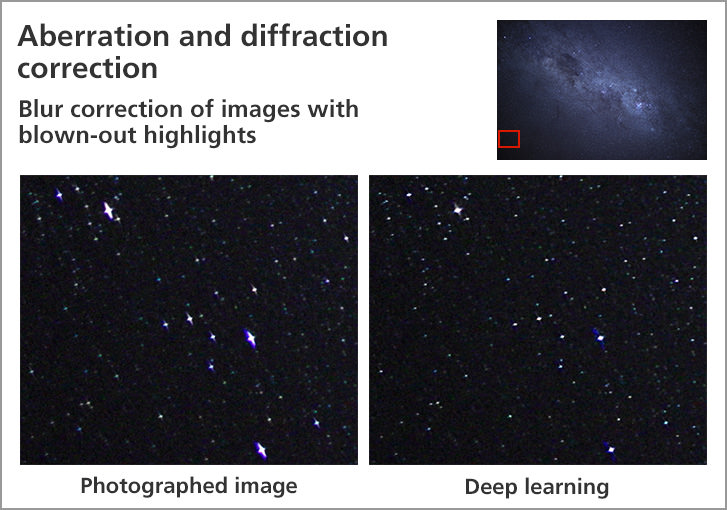

Blurring around blown-out highlights is noticeable, but such blurring is hard to remedy because the information needed for correction processing is lost. However, Canon’s aberration and diffraction correction also works for such blurring. This was not easy to achieve. When a system is simply trained with training data consisting of images with and without blurring around blown-out highlights, the system may perform erroneous correction. Because little work had been done thus far to address blur correction around blown-out highlights, Canon needed to discover the problems associated with correction and clarify the principles behind their occurrence. The company improved its training data through such means as using computer graphics, and experimented by trial and error with various neural network architectures and post-processing methods. Through these efforts, Canon achieved correction processing that also accurately corrects blurring around blown-out highlights.

Correcting blur around blown-out highlights significantly reduces the blurring that tends to occur in the periphery of astrophotography images.

Color blur around blown-out highlights is also accurately corrected.

A 3-in-1 deep learning image processing technology that produces groundbreaking results

The image corrections achieved by deep learning image processing in the three areas of noise reduction, color interpolation, and aberration and diffraction correction perform better when applied in combination than when applied alone, greatly improving the finer details of image quality and the sense of dimensionality. For example, if the image data contains noise, aberration and diffraction correction is not effective in the finer details of the image. But when properly combined with a noise reduction method, the performance of aberration and diffraction correction is maximized.
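One reason the order of processing matters can be seen with a generic sharpening filter. The sketch below is an assumption-laden illustration, not Canon's correction: the kernel `[-1, 3, -1]` stands in for a conventional blur correction, and the flat noisy signal is synthetic. Applying the filter to data that still contains noise makes the noise several times stronger.

```python
import random
import statistics

def sharpen(signal):
    """Apply the 1D kernel [-1, 3, -1]; the two edge samples are left unchanged."""
    out = list(signal)
    for i in range(1, len(signal) - 1):
        out[i] = -signal[i - 1] + 3 * signal[i] - signal[i + 1]
    return out

rng = random.Random(0)
# A flat gray area with a small amount of synthetic noise and no real detail.
noisy_flat = [0.5 + rng.gauss(0.0, 0.01) for _ in range(1000)]

noise_before = statistics.pstdev(noisy_flat)
noise_after = statistics.pstdev(sharpen(noisy_flat))
```

For uncorrelated noise this kernel raises the standard deviation by a factor of roughly the square root of 11, a little over three. Reducing noise before restoring fine detail avoids feeding that noise into the sharpening step.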

Accomplishing this required the development of algorithms to optimize the combination and order of the technologies. It is no exaggeration to say that Canon was uniquely positioned to achieve this image correction technology because its related departments, such as camera development, lens design, image quality evaluation, and product implementation, are able to work closely together using actual equipment and products.

In the past, there were challenges that made it difficult to capture a photo exactly as you imagined it.

For example, when shooting night scenes, if you open up the lens aperture so that more light can be captured, the aberration reduces resolution, and if you narrow the aperture in response, the amount of light that can be captured decreases, forcing you to increase the ISO sensitivity, which in turn increases the amount of noise. Furthermore, when taking a picture of a landscape that is in focus all the way from the foreground to the background, if you narrow the aperture to increase the depth of field, the diffraction blur will impair sharpness. But if you try to avoid this diffraction blur, you will not be able to expand the area of focus.
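The trade-off can be put into rough numbers with two standard photographic relations: exposure changes by two stops for each doubling of the f-number, and the diffraction spot (Airy disk) diameter is about 2.44 times the wavelength times the f-number. The f-numbers, the base ISO of 100, the 550 nm wavelength, and the function names below are illustrative assumptions, and the shutter speed is assumed to stay fixed.

```python
import math

def stops_lost(f_from, f_to):
    """Stops of light lost when changing the f-number from f_from to f_to."""
    return 2 * math.log2(f_to / f_from)

def iso_to_compensate(base_iso, f_from, f_to):
    """ISO needed to keep the same exposure at an unchanged shutter speed."""
    return base_iso * (f_to / f_from) ** 2

def airy_disk_diameter_um(wavelength_nm, f_number):
    """Diameter of the diffraction spot to its first dark ring, in micrometers."""
    return 2.44 * (wavelength_nm / 1000.0) * f_number

stops = stops_lost(2.8, 11)                   # about 3.95 stops
iso = iso_to_compensate(100, 2.8, 11)         # about ISO 1543
spot_wide = airy_disk_diameter_um(550, 2.8)   # about 3.76 micrometers
spot_narrow = airy_disk_diameter_um(550, 11)  # about 14.76 micrometers
```

Under these assumptions, stopping down from f/2.8 to f/11 costs nearly four stops of light and makes the diffraction spot about four times wider, so the photographer pays in either noise or sharpness.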

Canon's deep learning image processing technology enables image correction processing that was previously impossible, as well as significant reduction of aberrations, noise and moire.

This has greatly expanded the range of shooting scenarios and types of photographic expression available to users. Now, there are more situations where the user can shoot in exactly the setting they want without lowering the ISO sensitivity for fear of image quality degradation. It's easier than ever to recreate the "scene as it is" in fine detail.

Canon will continue to evolve its image processing technology to provide users with a shooting experience that will bring them joy.

By realizing synergy through the right combination of three methods of image correction, Canon has made possible a photographic representation with significantly fewer aberrations and less noise, a feat that was previously impossible.
